RESEARCH TOPICS

How does the brain construct what we see? This overarching question inspires and guides our research. We approach this question by focusing on several overlapping topics.

Computational models of subjective perception

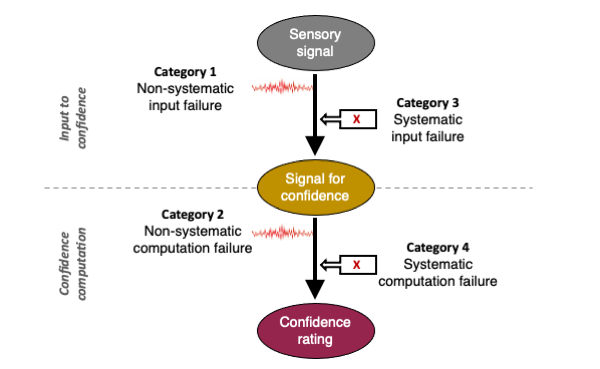

Our lab is best known for its work on how people evaluate their own subjective perception. We often study this by presenting ambiguous stimuli and asking subjects to report their confidence in their decisions. We then build computational models of the internal mechanisms used to make these judgments. Going forward, one area of emphasis is the use of increasingly realistic tasks that mimic the problems the brain needs to solve in the real world.

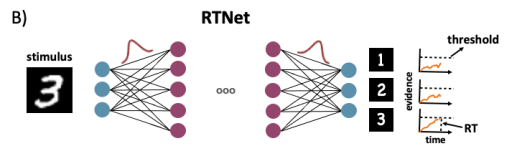

Deep neural networks and human vision

Deep neural networks (DNNs) have recently revolutionized computer vision. These networks are inspired by the architecture and computational principles of the human brain. However, they also diverge from human vision in many ways, most notably in how they are trained. Our lab has numerous projects testing the points of similarity and difference between DNNs and human vision. The objective is to use DNNs to better understand how human vision works, and to improve current DNNs.

Patients with brain-based visual deficits

A new area of research in the lab is testing patients with brain-based visual deficits. These are people with an injury or disease affecting the visual areas of the brain. They can exhibit unusual symptoms, such as being unable to see color, recognize human faces, or pay attention to one side of the visual field. The work is done in collaboration with clinicians at Emory.

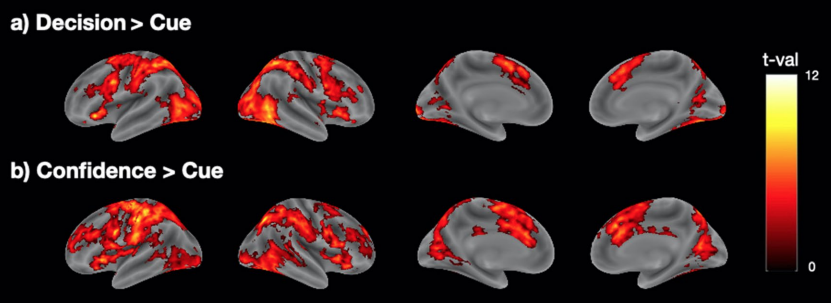

Neural mechanisms of subjective perception

We try to understand the neural bases of subjective perception through the use of functional magnetic resonance imaging (fMRI) and transcranial magnetic stimulation (TMS). We are especially interested in how higher areas of the brain send feedback signals to modulate early sensory processing.